Managed Cloud Hosting

Dedicated CPU & RAM for speed, uptime and performance.

When should you upgrade from Shared to Cloud?

Cloud handles sudden traffic without performance drops.

Isolated cloud infrastructure improves reliability.

Higher inode and resource limits support growth.

Dedicated CPU, RAM, and NVMe—no noisy neighbors.

Predictable Cloud Pricing. Same Price at Renewal.

30-day money-back guarantee

Free expert website migration

Free domain & SSL included

30-Day Money Back

Request a refund, and we’ll process it promptly with no questions asked.

Customer success stories

MilesWeb Cloud or VPS — Choose What’s Right for You

Compare performance, control, and peace of mind — side by side.

| Features | MilesWeb Cloud | MilesWeb VPS |
| --- | --- | --- |
| Recommended For | Growing websites and online stores | Server admins and technical users |
| 24/7 Expert Support | Yes | Chargeable |
| Control Panel | Included | Add-on (₹1999/mo) |
| Server Security Suite | Included | Add-on (₹2499/mo) |
| LiteSpeed Web Server | Yes | Add-on (₹2700/mo) |
| Backups | Daily backups | Add-on (₹1400/mo) |
| CDN | Included | Add-on (₹2500/mo) |
| Migration Support | Free | Not included / Partial support |
| SSL Certificates | Free | Manual configuration required |
| Monthly Price (Cost Including All Features) | ₹1,499.00 | ₹11,947.00 |

Stress-free hosting. Proactive support.

Real humans. Fast answers. Support in English or Hindi, 24/7.

24/7 support in English & Hindi

Live chat, email, and ticket assistance — backed by real hosting experts.

Fast & friendly responses

Average live chat response time: under 30 seconds.

What our team helps with

- WordPress plugin & theme conflicts

- Hacked or broken website recovery

- Performance tuning on request

- Initial website setup & launch

MilesWeb vs Others: Who delivers more value?

We’ve compared MilesWeb with leading cloud hosting providers to help you make the smarter choice.

| Company origin: India | MilesWeb | Others |
| --- | --- | --- |
| **Price** | | |
| Offer price | ₹449.00/mo | ₹599.00/mo |
| Renewal price | ₹449.00/mo (Same price at renewal) | ₹1,599.00/mo (+191% hike) |
| Plan term | 36 / 48 Months | 48 Months |
| Total 4-Year Cost | ₹23,952.00 | ₹26,352.00 |
| **Resources** | | |
| Websites | 100 | 100 |
| RAM | 5 GB | 4 GB |
| CPU Cores | 5 Cores | 4 Cores |
| Inodes (Files) | 30,00,000 | 20,00,000 |
| NVMe Storage | 150 GB | 100 GB |
| **Features** | | |
| Email Accounts | 150 Emails | Free 1 Year, then Paid |
| Daily Backups | | |
| Web Application Firewall | | |
| Instant Malware Removal | | |
| Support | 24/7 Human Support (EN + Hindi) | 24/7 Bot Support |
| 30-Day Money Back | | |
| Free SSL & Domain | | |
| **Limit & Performance** | | |
| Subdomains | Unlimited | 300 |
| Traffic Handling | 2,25,000 Visits | 2,00,000 Visits |
| Databases | Unlimited | 300 |
| MySQL Max Connections | 125 | 100 |
| **Migration** | | |
| Email Migration | | |
| Application Migration | | |

Prices are indicative and based on publicly available information at the time of comparison. Actual prices may vary depending on plan duration, offers, region, and applicable taxes.
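To compare plans on total cost rather than headline price, the arithmetic can be sketched as below. This is a minimal illustration in Python, not MilesWeb's pricing logic: the function names and the round figures (500 and 1,500 per month, a 12-month offer term) are hypothetical and chosen only to show how a renewal hike changes spend over 48 months.

```python
def total_cost(offer_price, renewal_price, offer_term_months, horizon_months):
    """Total spend over horizon_months.

    The offer price applies for the initial term; the renewal price
    applies to every month after that. All inputs are hypothetical.
    """
    offer_months = min(offer_term_months, horizon_months)
    renewal_months = horizon_months - offer_months
    return offer_price * offer_months + renewal_price * renewal_months


def renewal_hike_percent(offer_price, renewal_price):
    """Percentage increase from the offer price to the renewal price."""
    return (renewal_price - offer_price) / offer_price * 100


# Hypothetical plan A: same price at renewal.
flat_plan = total_cost(500, 500, offer_term_months=12, horizon_months=48)

# Hypothetical plan B: same offer price, higher renewal price.
hiked_plan = total_cost(500, 1500, offer_term_months=12, horizon_months=48)

print(flat_plan)                        # 24000
print(hiked_plan)                       # 60000
print(renewal_hike_percent(500, 1500))  # 200.0
```

Plugging in a provider's published offer price, renewal price, and plan term gives a like-for-like figure, subject to the same caveats about offers, region, and taxes noted above.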

Switch to MilesWeb with zero downtime

Our experts migrate your site for free: no data loss, no hassle.

- Free website migrations by qualified experts

- No downtime during the actual transfer

- No data lost or links broken during the migration

- Support for WordPress and CMS

- Comprehensive post-migration testing


Free migration • Zero downtime

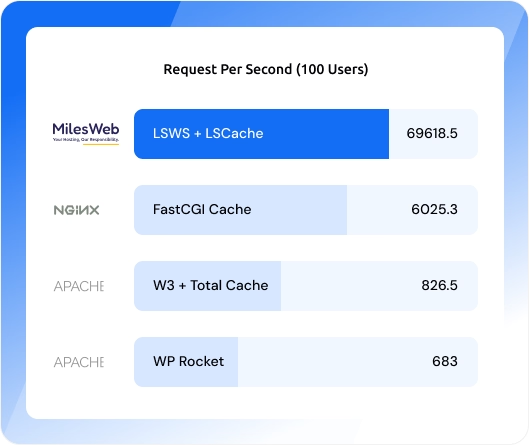

Achieve faster load times with LiteSpeed web server + LSCache

- Good website speed = good business results.

- Enjoy faster websites and shorter loading times with powerful LiteSpeed web servers.

- Improve your Core Web Vitals scores, search (SERP) rankings, and overall user experience with LiteSpeed Cache.

- Get faster server response times and lower latency with MilesWeb’s high-speed servers powered by LiteSpeed.

Best managed cloud server with simplicity, scalability & security

Embrace the agility of cloud hosting server features, where flexibility meets performance to fuel your digital innovation.

Scale for excellence

Start small and scale instantly with the best cloud hosting services in India, adding resources with one click for effortless, long-term growth.

Instant setup & website launch

Get instantly activated managed cloud hosting with expert-assisted setup, speed, security, free SSL, and on-demand backups.

WordPress 1-click staging

Safely test themes, plugins, and updates in staging, then go live in one click without risking your main WordPress site.

Built-in caching boost

Enjoy faster load times and better SEO with integrated cache management on every managed cloud hosting plan.

Affordable high performance

Get powerful cloud hosting resources that grow with your business while keeping costs low for maximum value.

Best control panel

Manage your cloud easily with an intuitive, user-friendly control panel that removes technical complexity and saves your time.

Hear what our customers have to say

We greatly appreciate the kind feedback from our customers.

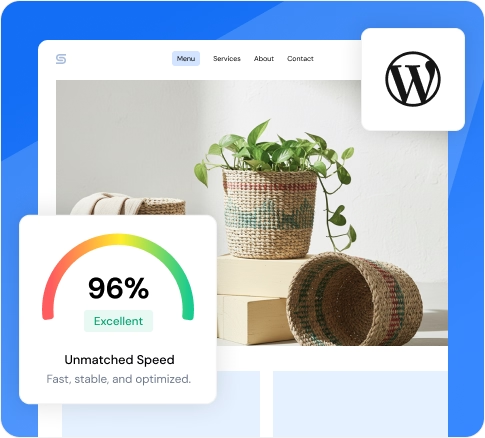

Dominate the web with blazing fast cloud speed

Global speed with unmatched performance

Reach a worldwide audience through our extensive network connections and global data center locations. Your visitors get fast site loading speeds and an excellent user experience wherever they are.

Search engine optimization made easy

We understand that SEO drives a website’s visibility. Google and other search engines tend to rank well-optimized websites higher. We help you boost your website by eliminating slow load times with our cutting-edge technology.

Say hello to increased sales

Don't let slow loading times steal your hard-earned traffic. We promise fast-loading pages that keep visitors engaged and drive conversions. Say goodbye to slow website response and lost sales.

Get the instant WordPress launch platform

We provide our pre-configured platform, designed specifically to launch your WordPress websites instantly. With effortless management, full-page caching, quick page optimizations, and smart routing, you get to experience lightning-fast efficiency.

Unparalleled speed with our enterprise-grade CDN

Deliver fast page loads to your audience worldwide. With our CDN, your website content is cached and distributed across the globe, with no need for costly and cumbersome plugins.

Cloud server hosting that’s an all-in-one solution

Whatever you are working on, the powerful managed cloud servers can take your project to new levels. The possibilities are endless.

Cloud hosting for eCommerce

Set up your online shop knowing that you can handle the sudden traffic spikes, no sweat.

Excellent for WordPress sites

MilesWeb provides reliable managed cloud hosting for WordPress, making it easy to host and scale your website.

IT infrastructure

A flexible and high-performance solution for all your IT infrastructure, especially for larger businesses.

Software development

Our cloud servers in India offer fully flexible and scalable resources, so you can develop software that grows with your project.

Cloud hosting services FAQs

What is cloud hosting?

Cloud hosting pairs the performance of VPS hosting with the ease of shared hosting. It uses interconnected nodes of remote servers for fast operations and data redundancy.

Because resources such as CPU, RAM, and storage are distributed across these nodes, no single point of failure can take the website down during a traffic surge.

Our cloud hosting serves as the premier infrastructure option for Indian businesses (e-commerce, emerging startups, and high-traffic blogs) looking to scale their digital footprint.

What are the key benefits of cloud hosting?

Here are a few key benefits of our feature-rich cloud hosting:

Fully Managed Infrastructure: Focus on your business goals and hand the administrative overhead to our engineers. Our team handles tasks such as real-time monitoring, security patching, and server hardware maintenance.

Intuitive Control Panel Interface: An easy-to-use control panel gives you granular control over backend administration.

Performance-Oriented Features: Our plans include a suite of premium features, including a free domain, integrated SSL, daily backups, LiteSpeed, and LSCache.

What is the difference between cloud hosting, shared hosting, and VPS?

Shared hosting is the most affordable way to get online: because server resources are shared, so is the cost. It is an ideal entry-level hosting solution for bloggers, startups, and medium-sized websites.

VPS servers grant full root access and dedicated resources, offering granular control over the server environment. This solution is engineered for technically proficient users who demand the power of dedicated servers within a virtualized framework.

Cloud hosting offers dedicated resources (CPU, RAM, and bandwidth) and seamless scalability through a fully managed platform. It is built for businesses that expect rapid growth.

When should I upgrade to cloud hosting?

Upgrade when your website sees regular traffic surges or runs resource-heavy marketing campaigns. Cloud hosting adapts to growing traffic, and its servers are designed to sustain performance at peak load, so processing power is available exactly when you need it most.

Our cloud infrastructure is configured for near-zero downtime, laying a strong foundation to elevate your business’s digital landscape.

What is the cost of cloud hosting in India?

Cloud hosting prices vary with the plan type and the website’s requirements. Startups and e-commerce websites get enterprise-grade dedicated resources starting at ₹499 per month.

For dynamic and high-traffic websites, premium configurations are available for ₹1,699 per month, with higher performance, more storage, and additional features.

We offer scalable cloud hosting plans with monthly, annual, and triennial billing cycles to fit your budget and growth plans.

What is the main difference between a cloud server and cloud hosting?

Cloud hosting and cloud servers use the same underlying technology. A cloud server offers dedicated resources on a virtualized model, with full root access and granular control. It suits users who want more autonomy and can invest the time and technical expertise that server management demands.

Cloud hosting, by contrast, is an optimized and managed environment that scales with your websites. MilesWeb handles backend server administration, so users can channel their efforts into business growth.

What support options are available with cloud hosting?

MilesWeb offers robust technical support. Our secured cloud hosting plans are backed by priority 24/7 technical support from our senior server administrators.

Whether the issue involves a complex server environment or proactive security patching, our professionals are available through live chat and email to keep your infrastructure optimized. You get enterprise-grade support that sustains business continuity.

What payment options are available for cloud hosting in India?

MilesWeb offers a range of flexible payment methods for Indian businesses. You can pay by credit or debit card, by BHIM UPI, through digital wallets like PayPal, or with traditional offline payments such as checks. Our payment gateway is designed for maximum protection, and flexible billing cycles let you match payments to the growth of your business.

How is MilesWeb’s cloud hosting infrastructure built?

Our cloud infrastructure uses CloudLinux LVE (Lightweight Virtual Environment) to run each account in an isolated, containerized environment. The system allocates dedicated virtualized resources to each account, which prevents other users from affecting your performance and improves server security. This resource-governed architecture keeps uptime steady and performance high regardless of other users’ activity, so your website remains secure and stable and delivers a smooth user experience.

How can I get assistance with my cloud hosting plan?

MilesWeb offers 24/7 customer support through our certified cloud specialists. We provide a top-notch customer experience, which solidifies our reputation as India’s leading web hosting provider. Questions raised through any of our support channels are resolved promptly, with actionable guidance to keep your website running.

Can I upgrade from my shared hosting plan to cloud hosting?

Yes, you can upgrade from shared hosting to cloud hosting. Pay only the price difference, and our cloud engineers handle the rest of the transition. If your website is hosted with another provider, we offer a free migration service to our cloud plans. You get enterprise-grade resources at a competitive price, with room to scale as your business grows.

When is the right time to opt for cloud hosting?

Cloud hosting suits the growing digital demands of enterprises. It is a strong choice for high-traffic websites, resource-intensive projects, and e-commerce platforms that need peak performance.

Our cloud infrastructure in India is engineered for scalability and multi-layered security. Its isolated environment keeps your operations unaffected by traffic spikes.

You can also evaluate our cloud hosting free trial for firsthand experience of the platform.

Try it risk-free with our 30-day money-back guarantee.