Haven’t artificial intelligence (AI) and machine learning (ML) turned the world upside down? Like literally!

You can do almost anything with AI and ML. You can make a machine talk, learn, and perform tasks on its own.

Artificial intelligence has made machines mimic not only humans but also human intelligence. And that is no small task!

Deep learning, a subfield of machine learning, has taken this mimicking to another level.

Ever watched the YouTube video titled “You Won’t Believe What Obama Says In This Video!”? It is one of the internet’s viral videos known to be fake, and it was created by Jordan Peele using sophisticated AI and deep learning tools.

See that man? He looks like Obama, he sounds like Obama, but Obama never actually said any of it!

Tricky, right?

Another relevant case is the slowed-down video of Nancy Pelosi. It was manipulated simply by slowing it down, yet it is often mistaken for a deepfake, which it is not!

Isn’t all this fascinating?

To tie together what you have read so far, here is the interesting part: deepfake technology made the Obama video possible.

Deepfake made it possible for Jordan Peele to be Mr. Obama in the video.

From these stories, you probably already have an idea of what deepfakes are all about.

Let’s dive into the details!

What is Deepfake?

Deepfake is a technique for creating a video that appears to be genuine, including realistic motions and audio. You might compare it to a mix of animation and photorealistic pictures. Deepfakes are created using AI-based deep learning models that replicate people’s appearances and voices so closely that the results can be difficult to distinguish from the real thing.

The term deepfake is derived from the words deep learning and fake. The deep learning part is significant because it is the basis for how deepfakes are created.

Creating a deepfake requires a target. In the story above, Mr. Obama was the target.

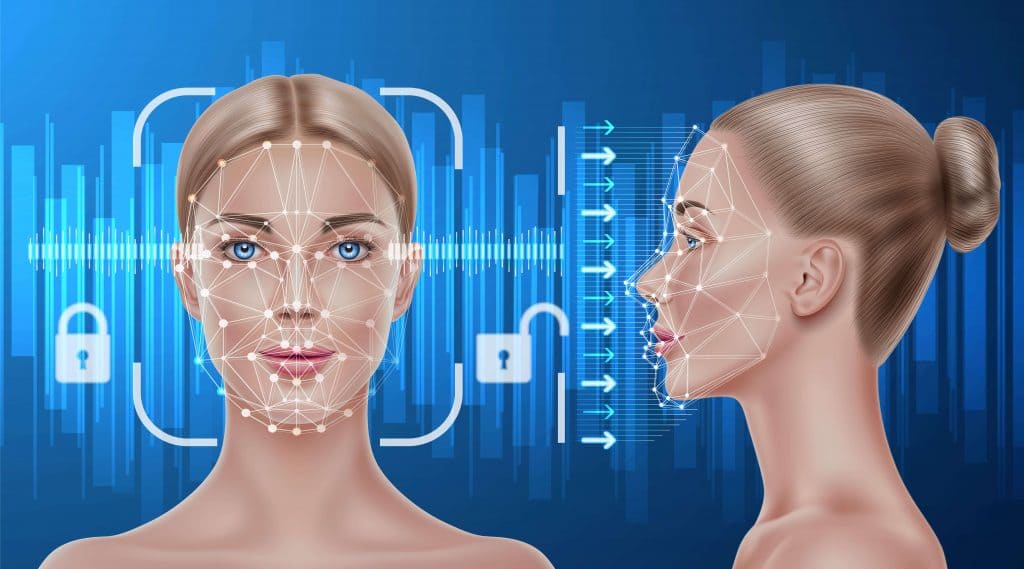

Other deepfakes may be created by training the AI to recognize a human face and then “face swapping” one face onto another. This method is simpler and faster, but it is also more prone to mistakes and inconsistencies, which makes the resulting videos easier to detect as fakes.

A deepfake is more believable when the voice also sounds like the target’s real voice. Using the same technique, creators can produce synthetic speech by feeding another AI model samples of someone’s speech so that it learns to mimic them.

History of Deepfake

Manipulating photos and films, including swapping faces in photographs, is not new. However, the word deepfakes first surfaced in 2017, after a Reddit user named “deepfakes” posted fake pornographic videos in which the faces of celebrities were grafted onto the bodies of other people.

How Does Deepfake Work?

Deepfake uses two independent sets of algorithms that work together to make a video: the first creates the video, while the second tries to judge whether the video is real or not. If the second algorithm detects a bogus video, the first algorithm tries again, having learned from the second algorithm what not to do. The two algorithms iterate until they produce a result that the programmers consider suitably realistic.
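
The back-and-forth between a creator and a judge is the idea behind a generative adversarial network (GAN). The sketch below is a deliberately tiny toy, not a real deepfake model, and everything in it is an assumption for illustration: the “generator” has a single number, theta, that it can adjust; the “real data” are random numbers centered on 4.0; and instead of a neural-network discriminator, a simple linear critic scores how real a sample looks. The function name `train_toy_adversarial` and all learning rates and step counts are hypothetical.

```python
import random


def train_toy_adversarial(steps=500, batch=64, real_mean=4.0, seed=0):
    """Toy adversarial loop: a one-number 'generator' learns to mimic real data.

    Generator: produces fake samples theta + noise (theta starts at 0).
    Critic: scores a sample x as w * x; a higher score means "looks more real".
    """
    rng = random.Random(seed)
    theta, w = 0.0, 0.0
    lr_critic, lr_gen = 0.2, 0.1
    for _ in range(steps):
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        fake = [theta + rng.gauss(0.0, 1.0) for _ in range(batch)]

        # Critic step: gradient ascent on mean(w*real) - mean(w*fake) - w**2 / 2,
        # i.e. learn to score real samples higher than fake ones.
        gap = sum(real) / batch - sum(fake) / batch
        w += lr_critic * (gap - w)

        # Generator step: gradient ascent on mean(w*fake) with respect to theta,
        # i.e. shift the fakes in the direction the critic rates as "more real".
        theta += lr_gen * w
    return theta, w


theta, w = train_toy_adversarial()
print(f"generator parameter: {theta:.2f} (real data centered on 4.0)")
```

As the fakes drift toward the real data, the critic can no longer tell them apart and its weight `w` shrinks toward zero, which is the toy version of “suitably realistic.” Real GANs replace both players with neural networks and train the discriminator as a real-versus-fake classifier, but the alternating updates follow the same pattern.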

How are Deepfakes Created?

Machine learning is the essential element of deepfakes, and it has allowed them to be produced considerably faster and more cheaply. To construct a deepfake video of someone, a developer first trains a neural network on several hours of genuine video footage of the person, giving it a realistic understanding of how he or she looks from various angles and under different lighting conditions. The trained network is then combined with computer graphics techniques to superimpose a copy of the person onto another actor.

While AI speeds up the process, it still takes time to create a plausible composite that places a person in an entirely fictional situation. To avoid blips and artifacts in the image, the creator must manually tune many of the trained program’s settings. The procedure is far from simple.
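
The “superimpose” step is, at its simplest, alpha blending: each output pixel mixes the generated face with the original frame according to a mask. This is a hypothetical minimal sketch on tiny grayscale images stored as nested lists; the function name `composite` and the sample values are assumptions, and real pipelines also align, color-match, and track the face in every frame.

```python
def composite(frame, face, mask):
    """Alpha-blend a generated face onto a target frame, pixel by pixel.

    frame, face: same-sized 2D lists of grayscale pixel values.
    mask: same-sized 2D list of weights in [0, 1]; 1 means "use the generated
    face", 0 means "keep the original frame", values in between blend the two.
    """
    return [
        [m * f + (1 - m) * b for b, f, m in zip(frame_row, face_row, mask_row)]
        for frame_row, face_row, mask_row in zip(frame, face, mask)
    ]


frame = [[0, 0, 0, 0]]         # original pixels of the target actor
face = [[200, 200, 200, 200]]  # pixels from the generated face
mask = [[0.0, 0.5, 1.0, 1.0]]  # soft edge on the left, full face on the right
print(composite(frame, face, mask))  # → [[0.0, 100.0, 200.0, 200.0]]
```

A soft mask, with values between 0 and 1 near the edges of the face, is one common way to hide the hard seams that would otherwise show up as blips and artifacts.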

Many people believe that generative adversarial networks (GANs), a type of deep learning algorithm, will be the main engine of deepfake development in the future.

Deepfake tools can create a face-swap video in a few steps. First, you run thousands of photos of the two people through an AI system called an encoder. The encoder finds and learns the similarities between the two faces and reduces them to their shared features, compressing the images in the process. A second AI system, called a decoder, then reconstructs the faces from the compressed images. Because the two faces are different, you train one decoder to recover the first person’s face and another decoder to recover the second person’s face. To perform the face swap, you feed the encoded images of one person into the decoder trained on the other person.
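
The shared-encoder, two-decoder trick can be sketched in a few lines. This is a hypothetical toy, not a real face-swap model: each “photo” is a short list of numbers whose first two values stand for identity and the rest for pose and expression, the encoder is hard-coded to keep only the shared pose/expression part, and each decoder “learns” its person by averaging that person’s identity values. In a real system, the encoder and both decoders are neural networks learned from large sets of images. All names (`encode`, `train_decoder`, `IDENTITY_DIMS`) and data are made up for illustration.

```python
# Hypothetical toy data layout: the first IDENTITY_DIMS numbers in a "photo"
# describe who the person is; the remaining numbers describe pose/expression.
IDENTITY_DIMS = 2


def encode(photo):
    """Shared encoder: keep only the features both people have in common."""
    return photo[IDENTITY_DIMS:]


def train_decoder(photos):
    """Toy 'training': learn one person's identity as the average of their identity features."""
    identity = [sum(p[i] for p in photos) / len(photos) for i in range(IDENTITY_DIMS)]

    def decode(latent):
        # Rebuild a full "photo" of THIS person with the given pose/expression.
        return identity + list(latent)

    return decode


alice_photos = [[1.0, 0.0, 0.3, 0.1], [1.0, 0.0, -0.2, 0.5]]
bob_photos = [[0.0, 1.0, 0.9, -0.4], [0.0, 1.0, 0.1, 0.2]]

decode_alice = train_decoder(alice_photos)
decode_bob = train_decoder(bob_photos)

# Normal use: Bob's photo through Bob's decoder reconstructs Bob.
print(decode_bob(encode(bob_photos[0])))    # → [0.0, 1.0, 0.9, -0.4]
# Face swap: Bob's encoded photo through Alice's decoder gives
# Alice's identity with Bob's pose/expression.
print(decode_alice(encode(bob_photos[0])))  # → [1.0, 0.0, 0.9, -0.4]
```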

Deepfake Websites

- Deepfakes web β

Deepfakes web β is an online application that lets you make deepfake videos. It uses deep learning to learn the complexities of facial data. Deepfakes web β takes up to 4 hours to learn from your videos and photos, and another 30 minutes to swap the faces using the trained model.

- MyHeritage

MyHeritage is another popular deepfake tool worth checking out. Its Deep Nostalgia feature, which animates old photos, has become popular among social media users. To use it, all you have to do is upload an image and hit the animate button.

In a matter of seconds, an animated version of the image appears, complete with a moving face, eyes, and mouth, as if it came straight out of one of the magical newspapers in Harry Potter.

- Zao

Zao went viral in China thanks to its ability to make deepfake videos in seconds. You pick a clip from its library, which includes scenes from Chinese drama series, The Big Bang Theory, iconic Hollywood films, and more, and Zao produces a realistic deepfake that can be hard to tell apart from the original footage. Training the generative adversarial network behind a deepfake video can take hours even on powerful computers, yet Zao needs only a few seconds.

- Wombo

If you haven’t been living under a rock, you’ve probably already seen some Wombo clips. Wombo is a lip-syncing app that turns a single photo of yourself or anyone else into a singing face. You pick one of 15 songs, and the face in the photo sings it. The app has been sweeping the globe, competing with Reels and TikTok for attention.

- FaceApp

FaceApp is a prominent app and one of the apps that popularized and democratized deepfakes and AI-generated face editing on smartphones. You upload a photo of yourself and can then see what you will look like when you are old, make yourself smile, and more. The app uses AI to make the edited photos look realistic. Not only is this a terrific way to have fun with your friends, but it is also handy if you have old photos and want the subjects to smile instead of keeping a straight face.

- Jiggy

Jiggy, on the other hand, takes the process a step further. It lets you make deepfake GIFs by inserting yourself into any GIF you choose. That’s quite awesome, isn’t it? All you have to do is choose a photo of yourself and the GIF you want to appear in. That’s all there is to it; Jiggy then uses AI to place your face in the GIF, complete with motion! It’s a great way to make personalized GIFs that are much more fun to share.

Is Deepfake Limited to Video Creation?

Deepfakes aren’t only about videos. Deepfake audio is a rapidly expanding field with a wide range of applications.

With just a few hours (or in some cases, minutes) of audio of the person whose voice is being cloned, deep learning algorithms can now create realistic audio deepfakes. Once a model of a voice is created, that person can be made to say anything.

Deepfake audio has applications in medicine, in the form of voice replacement, and in computer game design. In game design, programmers can let in-game characters say anything in real time instead of relying on a limited set of prerecorded lines.

Spotting Deepfake Videos and Audio

In a deepfake, the subject’s lips and jawline frequently show the most signs of strangeness. They may appear hazy around the edges or as though something isn’t aligned correctly. When you look at the face, you may get an unsettling sensation, the feeling you get when something artificial seems eerily human-like. Your brain may be alerting you that something is wrong, but your eyes may need another replay (or two) to figure out what.

Mouth movements are another frequent telltale sign. The mouth may not seem to match the speech, moving too fast or looking unnaturally smooth, which can result from poor lighting or imperfect lip-syncing.

Look closely and you may also notice lighting inconsistencies, particularly around the forehead and cheeks. This happens because the software does not always account for the changes in illumination that occur when people turn their heads.
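
The lighting cue can be turned into a rough automated check. The sketch below is a hypothetical heuristic, not an established detector: it assumes you already have, for each frame, the average brightness inside the face region and in the rest of the scene (how to measure those is left out), and it flags frames where the scene’s lighting shifts noticeably but the face barely reacts. The function name and both threshold values are arbitrary assumptions.

```python
def flag_lighting_mismatches(frames, threshold=10.0, min_ratio=0.5):
    """Return indices of frames where scene lighting changes but the face does not follow.

    frames: list of (face_brightness, scene_brightness) pairs, one per frame.
    threshold: minimum scene brightness change worth checking (arbitrary units).
    min_ratio: the face must change by at least this fraction of the scene's change.
    """
    flagged = []
    for i in range(1, len(frames)):
        face_change = abs(frames[i][0] - frames[i - 1][0])
        scene_change = abs(frames[i][1] - frames[i - 1][1])
        # A pasted-in face may keep its original lighting while the real scene
        # around it brightens or darkens; that mismatch is a hint, not proof.
        if scene_change > threshold and face_change < min_ratio * scene_change:
            flagged.append(i)
    return flagged


frames = [(100, 100), (101, 101), (101, 130), (131, 131)]
print(flag_lighting_mismatches(frames))  # → [2]
```

In frame 2 the scene brightens sharply while the face stays the same, so it is flagged; in frame 3 the face catches up on its own, which this heuristic deliberately ignores because a real head turn can change facial lighting too.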

What Are Deepfakes Mostly Used For?

Women are currently the main victims of deepfakes: nonconsensual pornography accounts for 96% of the deepfakes circulating on the internet. Most of these graft celebrities’ faces onto pornographic footage, but there are rising reports of deepfakes being used to create revenge pornography targeting private individuals.

Deepfakes could fuel bullying in general, whether in schools or workplaces, because anybody can place people in absurd, dangerous, or compromising situations.

Corporations are concerned about the potential role of deepfakes in escalating fraud.

Governments worry that deepfakes threaten democracy. If you can make a female star appear in a porn video, you can do the same to a politician running for reelection. Scary, isn’t it?

Are Deepfakes Always Malicious?

Certainly not. Some are amusing, while others are useful. Deepfakes that clone people’s voices can help them regain their voices if they’ve lost them due to illness. Deepfake videos can breathe new life into museums and galleries.

A deepfake of the surrealist painter Salvador Dalí greets visitors at the Dalí Museum in Florida, talks about his work, and even takes pictures with them. In the entertainment industry, the technology can improve the dubbing of foreign-language films and, more controversially, bring deceased actors back to the screen. Finding Jack, a Vietnam War film announced with a digitally recreated James Dean in a leading role, is one such example.

Solution to Deepfake

Ironically, AI might be the solution. Artificial intelligence is already helping to detect fake videos, but many current detection systems have a critical flaw: they work best for celebrities, because they can be trained on hours of freely available footage of them.

Tech companies are developing various technologies for deepfake detection. Another approach focuses on where the media came from. Digital watermarks aren’t foolproof, but a blockchain-based online ledger could store a tamper-proof record of videos, photos, and audio, allowing their origins and any alterations to be checked at any time.
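
The “tamper-proof record” idea rests on chaining cryptographic hashes. The sketch below is a simplified, single-machine hash chain, not a real blockchain (there is no network, consensus, or digital signing), and the function names `add_record`, `verify_ledger`, and `is_recorded` are hypothetical. Each entry stores a SHA-256 fingerprint of a media file plus the hash of the previous entry, so editing any past entry without rewriting everything after it is detected.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _entry_hash(body: dict) -> str:
    # Hash a canonical JSON encoding so the same body always gives the same hash.
    return _sha256(json.dumps(body, sort_keys=True).encode("utf-8"))


def add_record(ledger: list, media: bytes, note: str) -> None:
    """Append a record of a media file (its fingerprint plus a note) to the chain."""
    prev_hash = ledger[-1]["entry_hash"] if ledger else GENESIS
    body = {"media_hash": _sha256(media), "note": note, "prev_hash": prev_hash}
    ledger.append({**body, "entry_hash": _entry_hash(body)})


def verify_ledger(ledger: list) -> bool:
    """Check that no entry was edited and every entry links to the one before it."""
    prev_hash = GENESIS
    for entry in ledger:
        body = {k: entry[k] for k in ("media_hash", "note", "prev_hash")}
        if entry["prev_hash"] != prev_hash or _entry_hash(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True


def is_recorded(ledger: list, media: bytes) -> bool:
    """True if this exact file's fingerprint appears in the ledger."""
    fingerprint = _sha256(media)
    return any(entry["media_hash"] == fingerprint for entry in ledger)


ledger = []
add_record(ledger, b"original interview footage", "published by the newsroom")
add_record(ledger, b"color-corrected interview footage", "color correction only")
print(verify_ledger(ledger))                        # → True
print(is_recorded(ledger, b"deepfaked interview"))  # → False
ledger[0]["note"] = "quietly rewritten history"
print(verify_ledger(ledger))                        # → False
```

Note that a hash only proves a file is byte-for-byte identical to what was recorded; it cannot tell you whether the original recording itself was genuine.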

Can You Create Deepfakes?

Certainly. Academic and industry researchers, amateur enthusiasts, visual effects studios, and pornographers are all involved. Governments may also be experimenting with the technology as part of their online strategies, for example to discredit and disrupt extremist groups or to make contact with targeted individuals. As discussed, various applications are available for anyone to create deepfakes.

Although deepfakes may be created for various genuine or entertainment purposes, we do not encourage creating deepfakes for any purpose that may harm any individual.


Conclusion

You must have heard the saying, “Don’t trust everything you see, even salt looks like sugar!”

I would like to make a small change to it: “Don’t trust everything you see; salt may look like salt, but it may not be!”